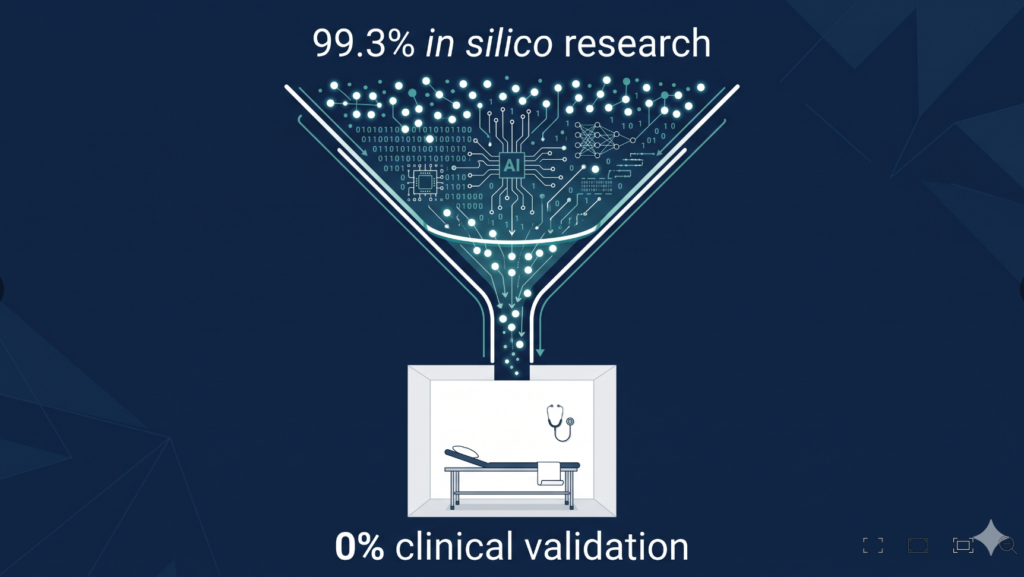

The AI implementation gap in otolaryngology is one of the most striking paradoxes in modern medicine. Here is a number worth sitting with: in a 2025 scoping review published in npj Digital Medicine, researchers analyzed the body of deep learning research in otolaryngology-head and neck surgery and found that 99.3% of studies were in silico. These were computational, proof-of-concept models built and tested on existing data and never used in real patient care. The number of studies that completed clinical validation? Zero percent (Liu et al, NPJ Digit Med, 2025).

ENT is a specialty with every reason to benefit from AI. We deal in multimodal data: high-resolution endoscopy, audiograms, tympanometry, voice recordings, CT scans of the temporal bone. The signal is rich. The technology is ready. And yet, walk into an ENT clinic anywhere in the world today, and AI is, for practical purposes, absent.

This piece examines the AI implementation gap in otolaryngology — why it exists, where it might close first, and what it would actually take to get AI into the exam room.

The Numbers Don’t Lie: ENT Has an AI Chasm

The term “AI chasm” is borrowed from technology adoption theory, but it has taken on specific meaning in otolaryngology. Researchers from Stanford University and collaborating academic medical centers used it to describe the structural gap between the volume of AI research produced in ENT and the near-zero rate at which that research translates into tools clinicians actually use (Liu et al, NPJ Digit Med, 2025).

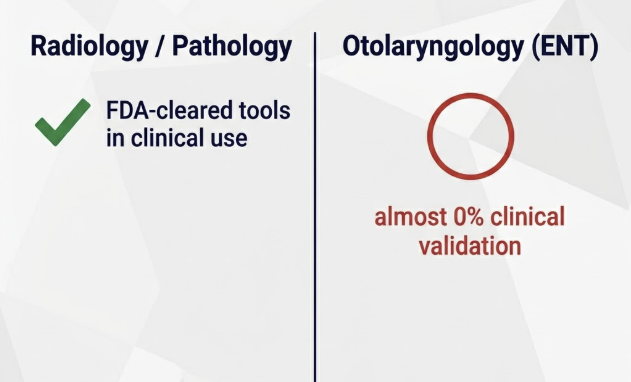

For context: in radiology and pathology, FDA-cleared AI tools are already embedded in clinical workflows. Radiologists use AI-assisted detection for pulmonary nodules and mammographic lesions as a routine second read. Pathologists use AI for whole-slide image analysis in cancer grading. These specialties had a head start — they operate in image-heavy, high-volume environments with standardized data formats — but they also committed to the hard work of prospective clinical validation.

What “In Silico” Actually Means — and Why It’s Not Enough

An in silico study trains and tests an AI model on historical datasets, often from a single institution, under controlled conditions. It answers the question: can this algorithm learn to recognize a pattern? It does not answer: does this tool improve patient outcomes when used by real clinicians in real time?

The gap between those two questions is enormous. A model that performs at 94% accuracy on a curated dataset can fail spectacularly when exposed to the variability of real clinical practice — different endoscope brands, different patient populations, different lighting in the OR. Clinical validation requires prospective trials, multi-center data, and regulatory scrutiny. That is expensive, slow, and structurally under-incentivized in academic medicine. In ENT, it has essentially not happened yet.

The Five Structural Barriers

A 2025 clinical commentary by Burmeister and colleagues identified the core obstacles preventing AI adoption in otolaryngology. They map cleanly onto five categories (Burmeister et al, SAGE Open Med, 2025).

1. No Reimbursement Pathway

Hospitals and clinics are businesses. Without insurance reimbursement for AI-assisted care — no CPT codes, no line item in the fee schedule — there is no financial rationale for a clinic to invest in AI infrastructure. As of 2026, major payers in the US and most other countries do not reimburse for AI-assisted ENT care. The tool may save a physician ten minutes; if neither the physician nor the hospital is paid for that efficiency gain, adoption stalls.

2. Workflow Integration Failure

The average ENT outpatient visit runs 15–20 minutes. Any AI tool that adds cognitive burden, requires separate logins, or generates output the physician must re-interpret before acting will be abandoned within weeks of deployment. ENT data is also deeply siloed: endoscopy lives in one system, audiometry in another, imaging in PACS. Building AI that synthesizes across these silos requires interoperability work that most EHR vendors have not yet done for specialty clinics.

3. Regulatory and Medico-Legal Vacuum

In a recent survey of pediatric otolaryngology providers, 56.8% reported fear of legal liability and 66.7% reported a lack of institutional guidelines as major barriers to AI adoption (Sattar Othman et al, J Otolaryngol Head Neck Surg, 2026). This is not irrational caution. If an AI tool contributes to a missed diagnosis, the liability chain — physician, hospital, software vendor — is legally uncharted territory in most jurisdictions. Until regulators provide clear guidance and professional societies publish practice standards, many physicians will rationally choose to wait.

4. Data Scarcity in ENT Subspecialties

ENT encompasses dramatically different clinical domains: cochlear implant programming, vestibular diagnostics, head and neck oncology, rhinology, pediatric airway, and more. Each requires its own dataset, its own outcome metrics, and its own validation framework. Subspecialty case volumes are often too small to supply the large training datasets AI requires. A single academic center may perform 50 cochlear implant surgeries per year, so building a training dataset for CI mapping AI requires consortium-level data sharing that has yet to be organized in ENT.

5. Missing Subspecialty-Specific Validation

Most successful medical AI has been validated in high-volume domains with standardized inputs: chest X-rays, retinal photographs, skin lesion images. ENT lacks equivalent standardization. Laryngoscopy technique varies by surgeon. Audiometry protocols differ across clinics. Until ENT-specific reporting standards and validation frameworks exist — the equivalent of CONSORT-AI for otolaryngology — industry will not invest in the subspecialty-level validation studies the field needs.

Where AI Is Actually Working in ENT Today

The barriers above are real, but they are not universal. In a few narrow, well-defined domains, AI tools have shown genuine clinical promise — and in some cases, early deployment (Ramya et al, Egypt J Otolaryngol, 2026).

In laryngeal endoscopy, the evidence base is clearest. A systematic review and meta-analysis of 11 studies found AI models achieved pooled sensitivity of 0.91 and specificity of 0.97 for identifying healthy laryngeal tissue, with overall accuracy ranging from 0.806 to 0.997 (Żurek et al, J Clin Med, 2022).

The most clinically significant milestone to date arrived in 2026. The first worldwide multicenter external validation study demonstrated that an AI diagnostic classification model for laryngoscopy images was statistically noninferior in accuracy to otolaryngologists and expert laryngologists, and superior to general practitioners and GPT-4o (Sampieri et al, Otolaryngol Head Neck Surg, 2026). A prospective multicenter clinical trial of that same model is now underway.

These examples share a common profile: well-defined input data, a binary or near-binary clinical task, and an existing standard of care against which AI performance can be benchmarked. They are the right place to start. They are not yet the whole picture.

What Needs to Change: A Clinician’s Roadmap

Closing the AI implementation gap in ENT will require action at multiple levels simultaneously.

At the research level, the field needs subspecialty-driven validation consortia — multi-center collaborations designed specifically to generate the prospective clinical trial data that single institutions cannot produce alone. AAO-HNS and equivalent professional bodies in other countries are positioned to coordinate this, but have not yet done so at meaningful scale (Ayoub et al, Otolaryngol Head Neck Surg, 2025).

At the regulatory level, a tiered fast-track pathway for low-risk, decision-support AI tools — analogous to the FDA’s 510(k) process for devices — would allow validated tools to reach clinical deployment faster without compromising patient safety oversight. The key distinction is between tools that recommend (decision support) and tools that act (autonomous diagnosis); the former can be regulated more permissively.

At the clinical education level, AI literacy needs to enter ENT residency training. Physicians who understand how models are trained, what their failure modes look like, and how to critically evaluate a vendor’s validation claim will make better adoption decisions than those who either uncritically accept or reflexively reject AI tools.

Dr. Hong’s Take: The View From the Exam Room

Clinical Perspective

Honestly, I have yet to directly benefit from an AI tool in my clinic. Not because I doubt the technology — I follow the research closely and believe the potential is real. The obstacle is something more fundamental: in Korea, there is no nationally recognized AI tool for clinical ENT practice, and no formal government-level guidance that even signals it is safe to incorporate AI into direct patient care. Without that baseline legitimacy, using an AI tool in a patient encounter feels professionally exposed, regardless of how promising the underlying evidence may be.

Reimbursement reform is a distant horizon. But I think a more achievable near-term milestone would be national-level acknowledgment — some clear signal from regulators or professional bodies — that AI adoption in frontline clinical specialties is worth supporting. This matters especially for patient-facing specialties like ENT, internal medicine, or family practice, where the physician-patient relationship is central and liability concerns are acute. Radiology and pathology already operate in image-centric, data-heavy environments that make AI integration more natural; the rest of clinical medicine needs its own roadmap.

That is part of why CuriousMD exists. One of my goals for this blog is to translate ENT-specific AI research into plain language for a general audience — and in doing so, to contribute, however incrementally, to a cultural and policy environment where AI in the exam room is seen not as a liability, but as a legitimate tool in the hands of a qualified physician. If more patients understand what AI can and cannot do in ENT, and more policymakers understand what is blocking its adoption, we move the conversation forward in a way that journal articles alone cannot.

Key Takeaways

- According to a 2025 scoping review, 99.3% of deep learning studies in otolaryngology were in silico proof-of-concept work, and 0% had completed clinical validation.

- The five core barriers to AI adoption in ENT are: no reimbursement, workflow mismatch, regulatory uncertainty, data scarcity, and absent subspecialty-specific validation.

- AI shows the clearest early-stage evidence in laryngeal endoscopy: a 2026 multicenter study confirmed an AI classification model was noninferior to specialist laryngologists — but it has not yet scaled to routine clinical deployment.

- Closing the gap requires multi-center validation consortia, a tiered regulatory pathway, and AI literacy in ENT residency training.

- National-level policy signals — even short of full reimbursement — would meaningfully accelerate ENT physician adoption of AI tools in patient-facing practice.

Frequently Asked Questions

Why is AI adoption so slow in ENT clinics?

The primary reasons are structural: there is no reimbursement pathway for AI-assisted ENT care, most AI tools are not integrated into clinical workflows, regulatory guidance is absent in most countries, and the field lacks the prospective clinical validation studies that would justify widespread adoption. These barriers reinforce each other — without reimbursement, there is no commercial incentive to fund clinical trials; without clinical trials, there is no evidence base to drive reimbursement.

What is the “AI chasm” in otolaryngology?

The AI chasm refers to the gap between the high volume of AI research published about ENT — most of it technically sophisticated and promising — and the near-zero rate at which that research produces tools that reach clinical deployment. A 2025 scoping review in npj Digital Medicine found that of all deep learning studies in otolaryngology-head and neck surgery, exactly 0% had completed clinical validation.

Has AI been proven effective for ENT diagnosis?

In laryngeal endoscopy, yes, with important caveats. A systematic review of 11 studies found that AI models achieved pooled sensitivity of 0.91 and specificity of 0.97 for identifying healthy laryngeal tissue (Żurek et al, J Clin Med, 2022). A 2026 multicenter validation study then confirmed that an AI classification model was noninferior to specialist laryngologists (Sampieri et al, Otolaryngol Head Neck Surg, 2026). However, no prospective randomized clinical trial demonstrating improved patient outcomes has yet been completed in this or any other ENT domain, and that is the standard required before routine clinical adoption.

How can ENT physicians start engaging with AI today?

The most defensible starting points are low-risk, decision-support applications: AI-assisted audiometry review, ambient documentation tools (AI scribes) that reduce administrative burden without affecting diagnosis, and participation in clinical trials testing AI-aided endoscopic detection. Physicians should also engage with their professional societies to push for AI validation standards and reimbursement advocacy.

Joonpyo Hong, MD is a board-certified otolaryngologist practicing in Korea. This article reflects his clinical interpretation of published research and does not constitute individual medical advice.

References

- Burmeister JR, Dimock E, Haupert M, Zazay I. Bridging the AI implementation gap in otolaryngology: A clinical commentary. SAGE Open Med. 2025;13:20552076251396981.

- Liu GS, Fereydooni S, Lee MC, Polkampally S, Huynh J, Kuchibhotla S, Shah MM, Ayoub NF, Capasso R, Chang MT, Doyle PC, Holsinger FC, Patel ZM, Pepper JP, Sung CK, Creighton FX, Blevins NH, Stankovic KM. Scoping review of deep learning research illuminates artificial intelligence chasm in otolaryngology-head and neck surgery. NPJ Digit Med. 2025;8(1):265.

- Ayoub NF, Rameau A, Brenner MJ, Bur AM, Ator GA, Briggs SE, Takashima M, Stankovic KM; AAO-HNS Artificial Intelligence Task Force. American Academy of Otolaryngology-Head and Neck Surgery (AAO-HNS) Report on Artificial Intelligence. Otolaryngol Head Neck Surg. 2025;172(2):734-743.

- Ramya K, Manoj Kumar N, Sowmya S, Channaveeradevaru C. Integration of artificial intelligence into ENT practice: a comparative study of real time clinical and operative scenarios. Egypt J Otolaryngol. 2026;42(1).

- Żurek M, Jasak K, Niemczyk K, Rzepakowska A. Artificial intelligence in laryngeal endoscopy: Systematic review and meta-analysis. J Clin Med. 2022;11(10):2752.

- Sampieri C, Mora F, Peretti G, Larrosa M, Vilaseca I, Avilés-Jurado FX, Ioppi A, Bellini E, Alegre B, Ruiz-Sevilla L, Srivastava R, Sakellaridis AC, Razou A, Kotsis GP, Moccia S, Mattos LS, Baldini C. Multicenter clinical validation of an artificial intelligence diagnostic classification model for laryngoscopy images. Otolaryngol Head Neck Surg. 2026;174(4):1049-1058.

- Sattar Othman M, Hu K, Davidson J, Kirubalingam K, Graham ME, Coyle P, Bhargava EK, You P. The utilization of artificial intelligence by pediatric otolaryngology surgeons in professional practice. J Otolaryngol Head Neck Surg. 2026;55:19160216251411838.