AI-based prediction of speech outcomes after cochlear implantation, the ability to forecast a child’s language outcomes before surgery, has reached 92% accuracy in a landmark 2026 study in JAMA Otolaryngology–Head & Neck Surgery. For parents facing this decision, the question has always been the same: Will my child be able to speak?

For decades, we have answered that question with educated estimates — age at implantation, residual hearing, family support, access to therapy. These factors matter, but they are imprecise. Two children with nearly identical profiles can end up with very different language outcomes. A new study published in JAMA Otolaryngology–Head & Neck Surgery suggests we may now have a far better tool: an artificial intelligence model that reads a pre-surgical brain MRI and predicts language outcomes with over 92% accuracy (Wang et al., JAMA Otolaryngol Head Neck Surg, 2026). Here is what the research shows — and what it means for families considering cochlear implant surgery.

What the Study Found

Researchers from Northwestern University, Lurie Children’s Hospital of Chicago, the University of Melbourne, and the Chinese University of Hong Kong included 278 children with bilateral sensorineural hearing loss who received cochlear implants at three clinical centers. The children came from English-, Spanish-, and Cantonese-speaking families, and data were collected from July 2009 to March 2022. The result is a remarkably diverse and international dataset.

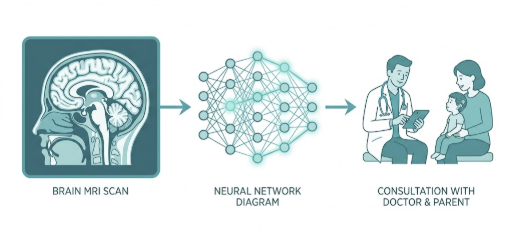

The team trained two types of models on each child’s pre-surgical brain MRI: conventional machine learning algorithms and a deep transfer learning (DTL) model. The task was binary classification: predicting whether a child would be a high or low language improver after implantation.

The results were striking:

| Metric | DTL Fusion Model | Best Traditional ML (Ridge) |
|---|---|---|
| Accuracy | 92.39% | 62.14% |
| Sensitivity | 91.22% | 55.72% |
| Specificity | 93.56% | 68.57% |
| AUC | 0.98 | 0.62 |

For context, a model using only traditional clinical variables achieved just 53.57% accuracy, barely above chance. Those variables were age at implantation, residual hearing, and related measures. The DTL fusion model, which combined neuroimaging with clinical data, outperformed both baselines by a wide margin on every metric. That sets a new benchmark for AI-based prediction of cochlear implant speech outcomes in a multicenter setting.
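
The metrics in the table can be made concrete with a short example. The minimal Python sketch below computes accuracy, sensitivity, specificity and AUC for a binary high/low improver classifier. It assumes "high improver" is the positive class (label 1). The labels, scores and 0.5 cut-off are invented toy values, not study data.

The table also has an arithmetic pattern worth noting. In both columns, the reported accuracy equals the mean of sensitivity and specificity, within rounding. For example, (91.22 + 93.56) / 2 = 92.39. That pattern is consistent with either balanced classes or a balanced-accuracy metric.

```python
def confusion_counts(y_true, y_pred):
    """Return (TP, FN, TN, FP), treating label 1 as the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    return tp, fn, tn, fp


def binary_metrics(y_true, y_pred):
    """Accuracy, sensitivity, specificity, and balanced accuracy for 0/1 labels."""
    tp, fn, tn, fp = confusion_counts(y_true, y_pred)
    sensitivity = tp / (tp + fn)  # share of true positives the model catches
    specificity = tn / (tn + fp)  # share of true negatives correctly labeled negative
    accuracy = (tp + tn) / len(y_true)
    return {
        "accuracy": accuracy,
        "sensitivity": sensitivity,
        "specificity": specificity,
        "balanced_accuracy": (sensitivity + specificity) / 2,
    }


def auc(y_true, scores):
    """ROC AUC as the chance that a random positive outscores a random negative.

    Ties count as half a win. This pairwise form is fine for small examples;
    rank-based formulas give the same value more efficiently on large data.
    """
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Invented toy data: 1 = high improver, 0 = low improver.
y_true = [1, 1, 1, 1, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.7, 0.3, 0.6, 0.2, 0.1, 0.05]
y_pred = [1 if s >= 0.5 else 0 for s in scores]  # hypothetical 0.5 cut-off

m = binary_metrics(y_true, y_pred)
area = auc(y_true, scores)
print(m, area)
```

In this toy case the four threshold-based metrics all equal 0.75, while AUC is 0.9375, because 15 of 16 positive-negative pairs are ranked correctly. The difference is the point: AUC summarizes ranking across all possible cut-offs. The other metrics describe a single chosen cut-off.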

How Does the AI Actually Work?

Understanding the mechanism matters, because it shapes how clinicians and families should think about this tool.

Step 1 — Pre-Surgical Brain MRI

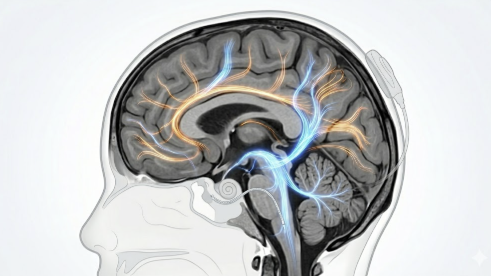

The model’s input is a T1-weighted structural MRI of the brain, taken before implant surgery. Pre-implant evaluation commonly includes temporal bone CT and/or internal auditory canal (IAC) MRI, and the MRI protocol often includes T1-weighted brain sequences. The AI therefore does not require a new type of scan. It extracts predictive information from imaging that many families already undergo as part of the routine surgical workup. The scan captures anatomical detail of brain structures involved in auditory processing and language development, including the auditory cortex.

Step 2 — Deep Transfer Learning

Deep transfer learning is a type of AI that starts from knowledge learned on a large, general dataset and is then fine-tuned on a smaller, task-specific dataset. Think of it like a radiologist who trained on tens of thousands of brain scans across all age groups and conditions, then specialized in pediatric cochlear implant candidates. The “bilinear attention” mechanism in this particular model gives more weight to the brain regions most relevant to predicting language outcomes. This helps it separate signal from noise more effectively than the conventional algorithms it was compared against.
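
The attention idea can be illustrated with a toy example. The plain-Python sketch below shows one common form of bilinear attention pooling, in three steps:

1. Each brain region's feature vector r_i is scored against a learned query q through a learned matrix W: s_i = r_i^T W q.
2. The scores are turned into weights with a softmax.
3. The region features are averaged using those weights.

The region features, query and matrix are hypothetical stand-ins. In a transfer-learning setup, the region features would come from a pretrained encoder, and fine-tuning would update parameters such as W and q on the smaller task-specific dataset. This is a generic illustration, not a reconstruction of the Wang et al. architecture.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(W, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, v)) for row in W]


def bilinear_attention_pool(regions, query, W):
    """Pool per-region feature vectors with bilinear attention.

    Each region vector r_i is scored as s_i = r_i^T W q, the scores are
    softmax-normalized into weights a_i, and the output is sum_i a_i * r_i.
    """
    wq = matvec(W, query)  # W q is shared by every region, so compute it once
    scores = [sum(r_j * wq_j for r_j, wq_j in zip(r, wq)) for r in regions]
    weights = softmax(scores)
    dim = len(regions[0])
    pooled = [sum(a * r[j] for a, r in zip(weights, regions)) for j in range(dim)]
    return pooled, weights


# Hypothetical 2-D features for three brain regions (not real MRI data).
regions = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
query = [1.0, 0.0]            # stands in for a learned query vector
W = [[1.0, 0.0], [0.0, 1.0]]  # stands in for a learned bilinear matrix
pooled, weights = bilinear_attention_pool(regions, query, W)
print(weights, pooled)
```

Here the first region aligns best with the query, so it receives the largest weight (about 0.51). The pooled vector leans toward that region's features. In a trained model, W and q would be learned so that high weights land on regions that carry predictive signal.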

Step 3 — Predict-to-Prescribe

Once the model classifies a child as a likely high or low language improver, the clinical response changes. Children flagged as lower-probability improvers can be enrolled in earlier, more intensive speech therapy before and after surgery. Children at the higher-probability end can follow standard post-implant protocols, and their families can be reassured on firmer grounds. The study authors describe this as a “predict-to-prescribe” approach: using the prediction not just for prognosis but to actively shape the intervention plan.
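
The predict-to-prescribe logic can be sketched as a simple decision rule. Several parts of this minimal Python example are hypothetical:

- the 0.5 cut-off;
- the assumption that the model exposes a probability of being a high improver;
- the plan wording;
- the names `care_plan` and `CarePlan`.

A real program would set thresholds clinically, with the ENT's judgment kept in the loop.

```python
from dataclasses import dataclass

# Hypothetical cut-off on the predicted probability of being a high improver.
HIGH_IMPROVER_THRESHOLD = 0.5


@dataclass
class CarePlan:
    risk_group: str
    therapy: str


def care_plan(p_high_improver: float, threshold: float = HIGH_IMPROVER_THRESHOLD) -> CarePlan:
    """Map a predicted probability to an illustrative therapy plan."""
    if not 0.0 <= p_high_improver <= 1.0:
        raise ValueError("p_high_improver must be between 0 and 1")
    if p_high_improver < threshold:
        # Lower-probability improvers: earlier, more intensive speech therapy.
        return CarePlan(
            risk_group="lower-probability improver",
            therapy="earlier, more intensive speech therapy before and after surgery",
        )
    # Higher-probability improvers: standard post-implant protocol.
    return CarePlan(
        risk_group="higher-probability improver",
        therapy="standard post-implant protocol",
    )


for p in (0.2, 0.9):
    print(p, care_plan(p))
```

The threshold is a parameter rather than a constant baked into the logic. That reflects the point that where to draw the line is a clinical decision, not a property of the model.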

A Clinical Perspective

It is striking to see an AI model produce results this strong in predicting language outcomes for cochlear implant recipients. For a long time, we relied on indirect markers such as age at implantation, residual hearing, and family engagement. These are clinically useful, but they struggle to capture the full complexity of language readiness. Variables like the duration of auditory deprivation, pre-surgical brain development, and socioeconomic support all matter, yet weighting them consistently for individual patients has remained difficult. Forecasting a child’s specific language trajectory was long considered too uncertain to offer with confidence.

The current standard of care still rests heavily on these indirect markers. Age at implantation is the most robust of them: earlier is consistently better, and most guidelines recommend surgery by 12 months of age for children with profound bilateral hearing loss. But age alone does not explain why two children implanted at the same age can diverge significantly in their language trajectories.

This model proposes something more direct: look at the brain itself, before surgery, and ask what it is ready to do. Brain structure reflects auditory cortex maturation, and maturation varies between children even at the same chronological age. An objective, MRI-based risk score could help ENTs move from population-level estimates to individual-level counseling — a meaningful clinical shift.

However, the critical caveat is that this model is not yet in clinical use. It requires prospective validation in a new cohort and regulatory review before any center can offer it to patients. Families should not expect to request this AI analysis at their next appointment. But the research direction is clear, and the groundwork is being laid.

Limitations and What Comes Next

Any honest reading of this study requires acknowledging its constraints. The sample of 278 children, while multicenter and multilingual, is still relatively modest for a predictive AI model. The study design was retrospective — the AI was trained and tested on data already collected, not on prospective new patients. Performance in real-world clinical settings, where data quality and scan protocols vary, may differ from controlled research conditions.
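
A quick calculation shows why 278 children counts as a modest sample. The Python sketch below computes a 95% Wilson score interval around the reported 92.39% accuracy. It rests on a simplifying assumption made only for illustration: that each of the 278 children contributed exactly one independent, held-out prediction. The study's actual evaluation scheme may differ, and a different scheme would change the calculation.

```python
import math


def wilson_interval(p_hat: float, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion p_hat observed in n trials.

    z = 1.96 corresponds to an approximate 95% confidence level.
    """
    z2 = z * z
    denom = 1 + z2 / n
    center = (p_hat + z2 / (2 * n)) / denom
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / n + z2 / (4 * n * n)) / denom
    return center - half_width, center + half_width


# Illustrative assumption: 278 independent held-out predictions.
low, high = wilson_interval(0.9239, 278)
print(f"{low:.3f} to {high:.3f}")
```

Under that assumption, the interval spans roughly 88.7% to 95.0%, a band of about six percentage points. That spread is one reason a larger prospective cohort matters.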

The model also produces a binary classification — high versus low improver — rather than a continuous prediction or milestone timeline. It cannot yet tell a family that their child will reach age-appropriate language by a specific year. Future research may address whether AI can also guide post-implant therapy programming, auditory-verbal therapy intensity, or school placement decisions.

A 2025 systematic review in npj Digital Medicine found that imaging-based studies consistently demonstrated high predictive accuracy for language and speech perception outcomes in cochlear implant recipients, outperforming non-neural approaches (Nair et al., npj Digit Med, 2025). Wang et al. offer one of the most rigorous multicenter demonstrations of this principle to date. Their study is also one of the first to show that performance holds across languages and continents.

Key Takeaways

- A deep transfer learning model predicted cochlear implant language outcomes in children with 92.39% accuracy, 91.22% sensitivity, and 93.56% specificity — compared to 62.14% for the best traditional ML approach.

- The AI model was trained and tested on data spanning three languages (English, Spanish, Cantonese) and three clinical centers on three continents. This suggests broad applicability, pending prospective validation.

- The model uses a T1-weighted brain MRI of the kind often already collected during pre-implant evaluation; no new type of scan is required.

- The “predict-to-prescribe” approach means AI risk scores can directly inform therapy planning — not just prognosis.

- The AI does not replace the ENT — it provides objective, reproducible data to support individualized counseling.

- This model is not yet clinically available. Prospective validation and regulatory review are required before clinical use.

FAQ

Can AI currently predict my child’s speech outcomes after cochlear implant surgery?

A 2026 study in JAMA Otolaryngology–Head & Neck Surgery showed that AI can predict whether a child is likely to be a high or low language improver with 92.39% accuracy, using pre-surgical brain MRI data. However, this model is still in the research phase and is not yet available as a clinical tool. Your ENT’s assessment remains the foundation of any implant decision.

How does the AI use an MRI to predict language development?

The deep transfer learning model analyzes structural brain features visible on a pre-implant MRI — specifically patterns linked to auditory cortex development. Its bilinear attention mechanism focuses on the most predictive regions of the brain, allowing it to classify children as likely high or low language improvers before surgery occurs.

What factors currently affect cochlear implant success in children?

The strongest traditional predictors are age at implantation, degree of residual hearing before surgery, family engagement, and access to consistent speech therapy. Earlier implantation — ideally by 12 months — allows the brain’s critical language window to be used more fully. AI-based MRI analysis may eventually add a more direct, neurological layer to this assessment.

When should a child get a cochlear implant for best language outcomes?

Current guidelines recommend cochlear implant surgery by 12 months of age for children with congenital profound bilateral sensorineural hearing loss. The earlier the auditory cortex receives sound input, the more plastic the brain remains for language development. Timing is one of the most evidence-based decisions in pediatric cochlear implant care.

Will this AI tool be available to patients soon?

Prospective validation and regulatory clearance are required before this model enters clinical practice. Research timelines vary, but given the strength of these results, further multicenter validation studies are likely underway. Ask your ENT specialist about emerging pre-implant evaluation tools as this field develops.

Joonpyo Hong, MD is a board-certified otolaryngologist practicing in Korea. This article reflects his clinical interpretation of published research and does not constitute individual medical advice.

References

- Wang Y, Yuan D, Dettman S, et al. Forecasting Spoken Language Development in Children With Cochlear Implants Using Preimplant Magnetic Resonance Imaging. JAMA Otolaryngol Head Neck Surg. 2026;152(3):232-241.

- Nair APS, Mishra SK, Alba Diaz PA. A systematic review of machine learning approaches in cochlear implant outcomes. npj Digit Med. 2025;8:411.